Are Agent Orchestration Frameworks Doomed in the Singularity Age? A Wake-Up Call for 2026 Builders

As Grok and Other Frontier Models Master Native Long-Running Orchestration, Investing Heavily in Rigid Tools Like LangChain, LangGraph, Haystack, and CrewAI Risks Becoming a Costly Detour

January 2026 may mark a major tipping point. At the end of 2025 the Moonshots podcast crew declared that AI has entered the Singularity, and Elon Musk echoed the claim in the first week of 2026.

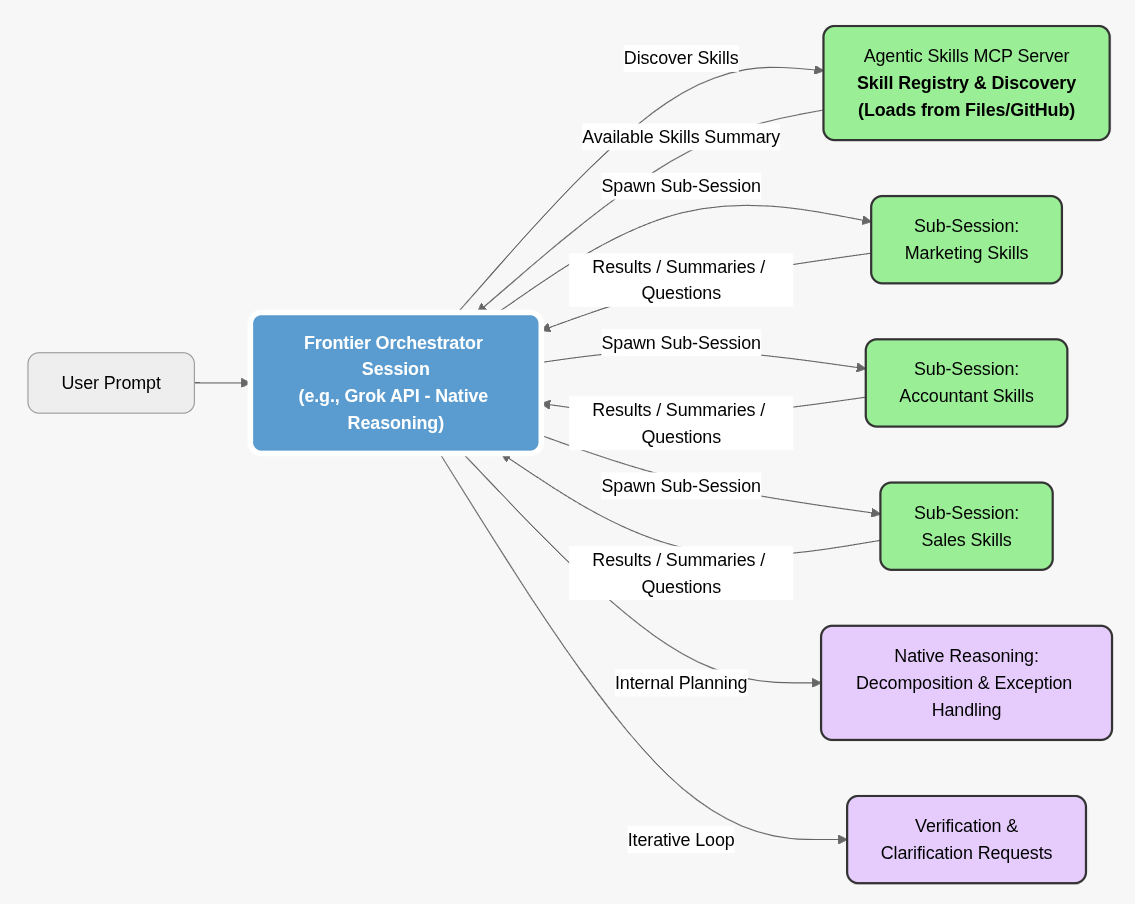

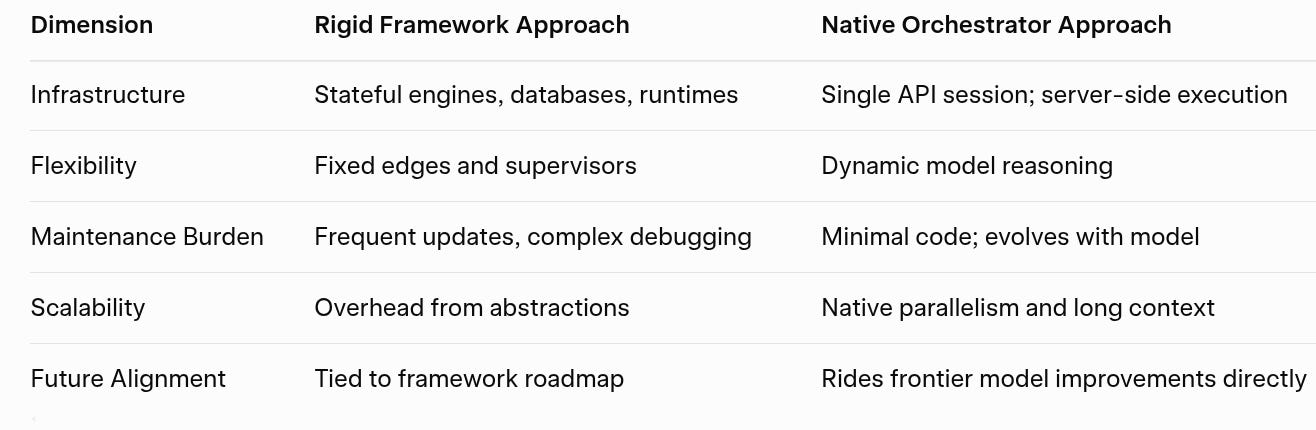

Frontier AI models are evolving so quickly that capabilities we engineered elaborate scaffolding for just a year or two ago—complex planning, parallel tool execution, exception handling, long-running sessions—are now emerging natively. Grok’s server-side MCP tool calling, for instance, already supports autonomous loops with parallel sub-agent delegation and iterative clarification, all without external graph runtimes.

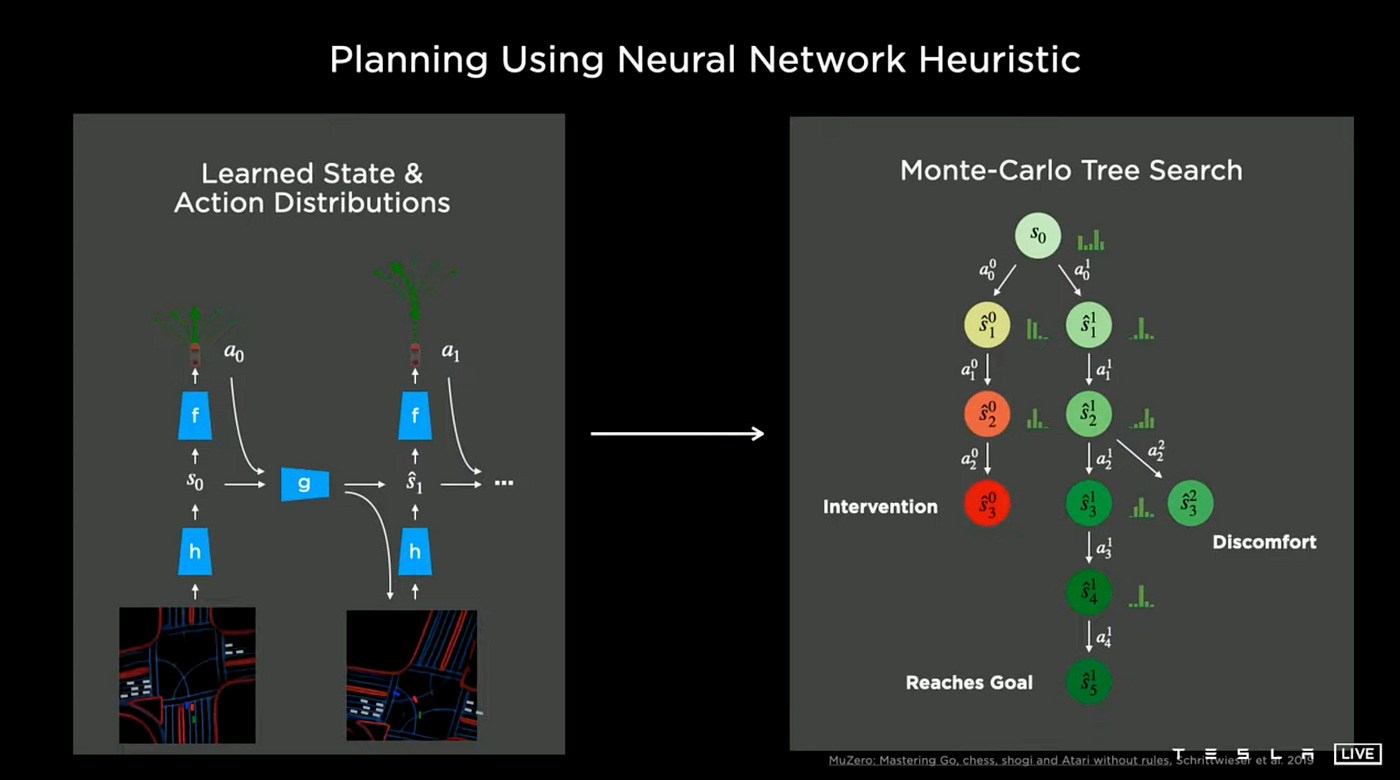

If you’re spending weeks mastering LangGraph’s stateful nodes, CrewAI’s hierarchical supervisors, or Haystack’s pipelines, it may be time to pause. The trajectory suggests these rigid, heuristic-heavy frameworks—designed to corral less capable models—could soon constrain more than they enable, much like hand-written rules hindered Tesla’s Full Self-Driving progress.

This article explores that trajectory through Andrej Karpathy’s prescient lens, real-world parallels, and a simpler alternative: natural language playbooks powering a single frontier orchestrator session.

Karpathy’s Software 2.0 Thesis: Accelerating Into Reality

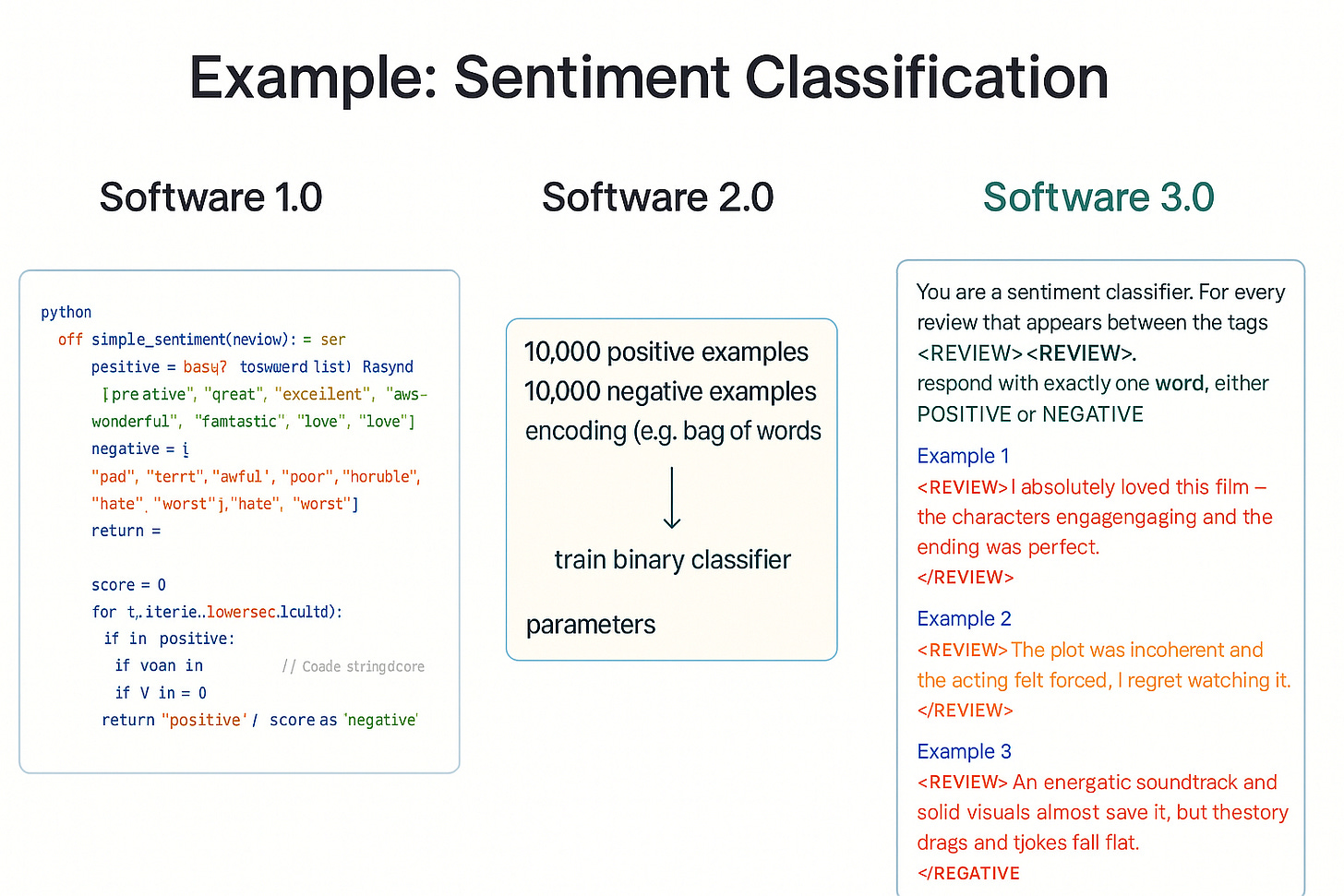

Andrej Karpathy’s 2017 essay “Software 2.0” predicted a seismic shift: neural networks would “eat” explicit code for problems where data is abundant and behaviors verifiable.

“Software (1.0) is eating the world, and now AI (Software 2.0) is eating software.”

Karpathy extended this in Tesla’s autonomy work and his 2025 YC talk “Software Is Changing (Again),” framing natural language as “Software 3.0.”

Developers no longer have to train or fine-tune models on large datasets, running expensive compute clusters for weeks or months. Frontier models can understand intent immediately, or after a few clarifying examples.

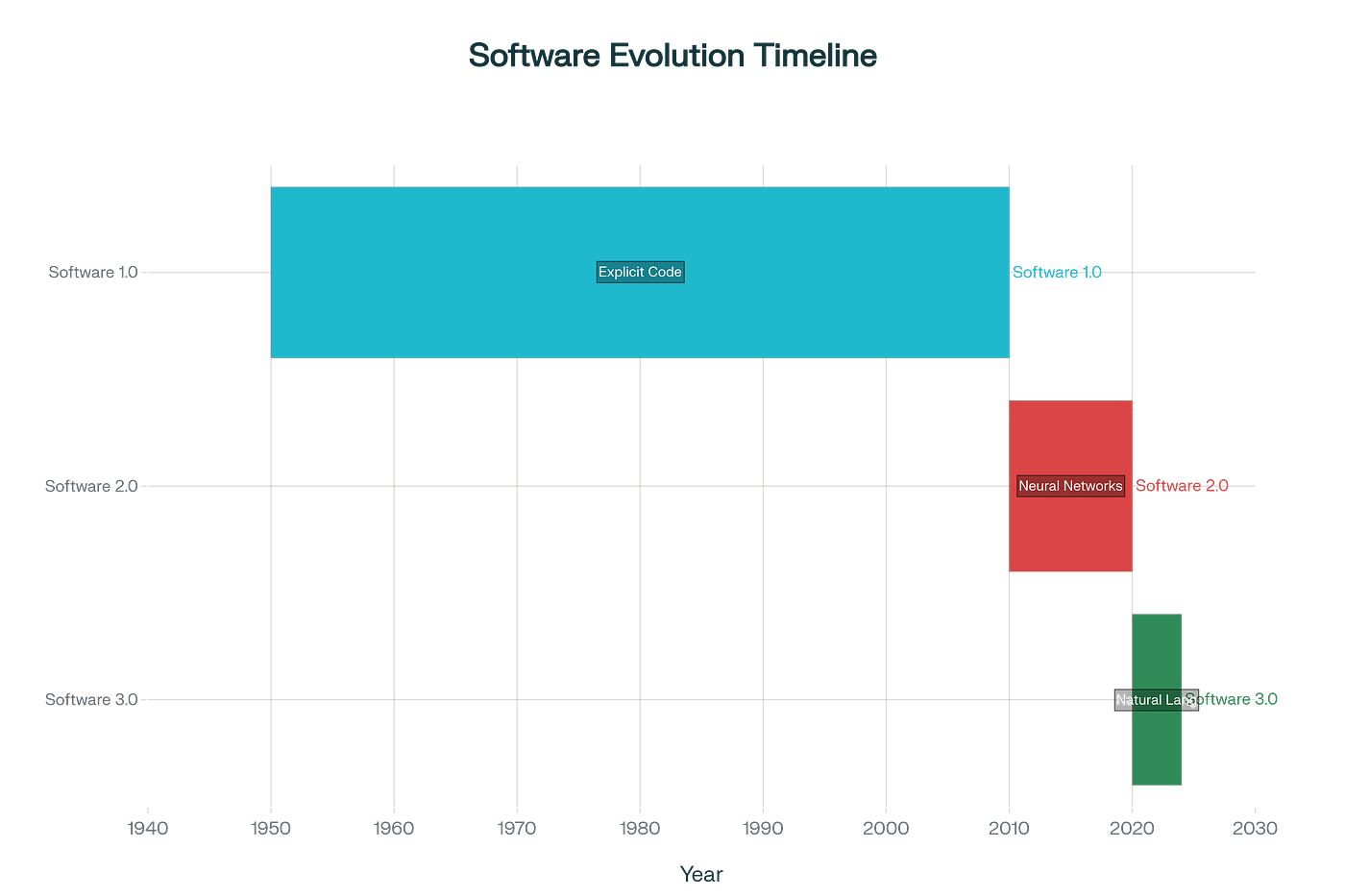

A timeline visualization captures the progression: explicit code dominating until ~2010, neural networks surging through the 2010s–2020s, and natural language emerging as the next paradigm around 2025.

Tesla FSD vividly demonstrates the pattern. Early stacks mixed heavy heuristic code (red) with limited neural perception (blue). Over iterations, learned components expanded relentlessly.

Rigid rules, once essential, became bottlenecks as scaling unlocked fluid, adaptive driving.

The Agentic Parallel: When Guardrails Become Chains

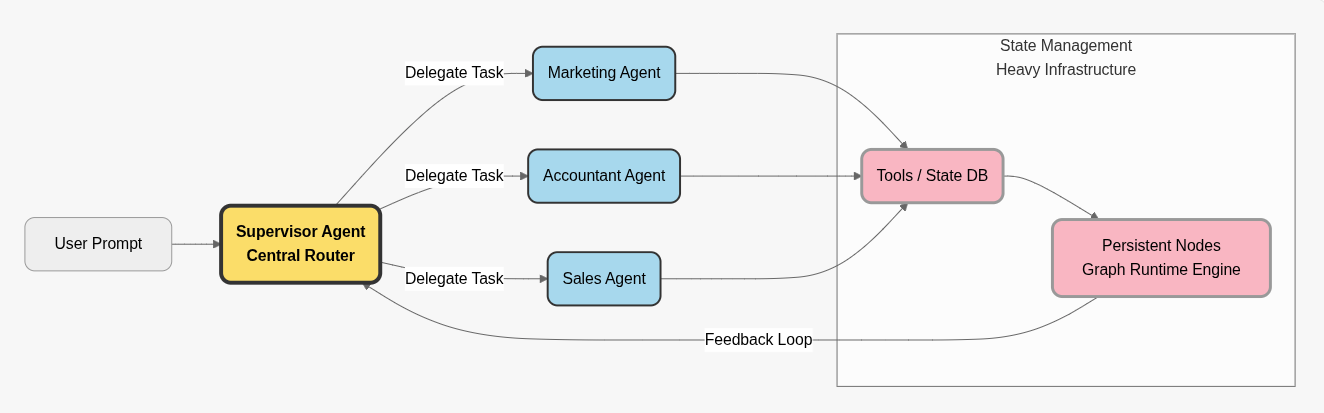

Agent frameworks followed the same logic. LangChain abstracted chains for unreliable models; LangGraph added graphs for state and routing; CrewAI introduced crews and managers; Haystack focused on retrieval pipelines.

These were brilliant solutions—for their time.

Today, frontier models like Grok handle natively what required scaffolding:

Persistent long-context sessions.

Parallel server-side tool/sub-agent calls.

Dynamic planning and self-correction loops.

Clarification requests without breaking flow.

The overhead—dependency bloat, breaking changes, explicit conditional logic—now risks mirroring Tesla’s early heuristics: brittle, hard to maintain, and limiting emergent intelligence.

Real-World Color: Claude Code and Kilo Code as Pioneers of Native Sub-Agent Orchestration

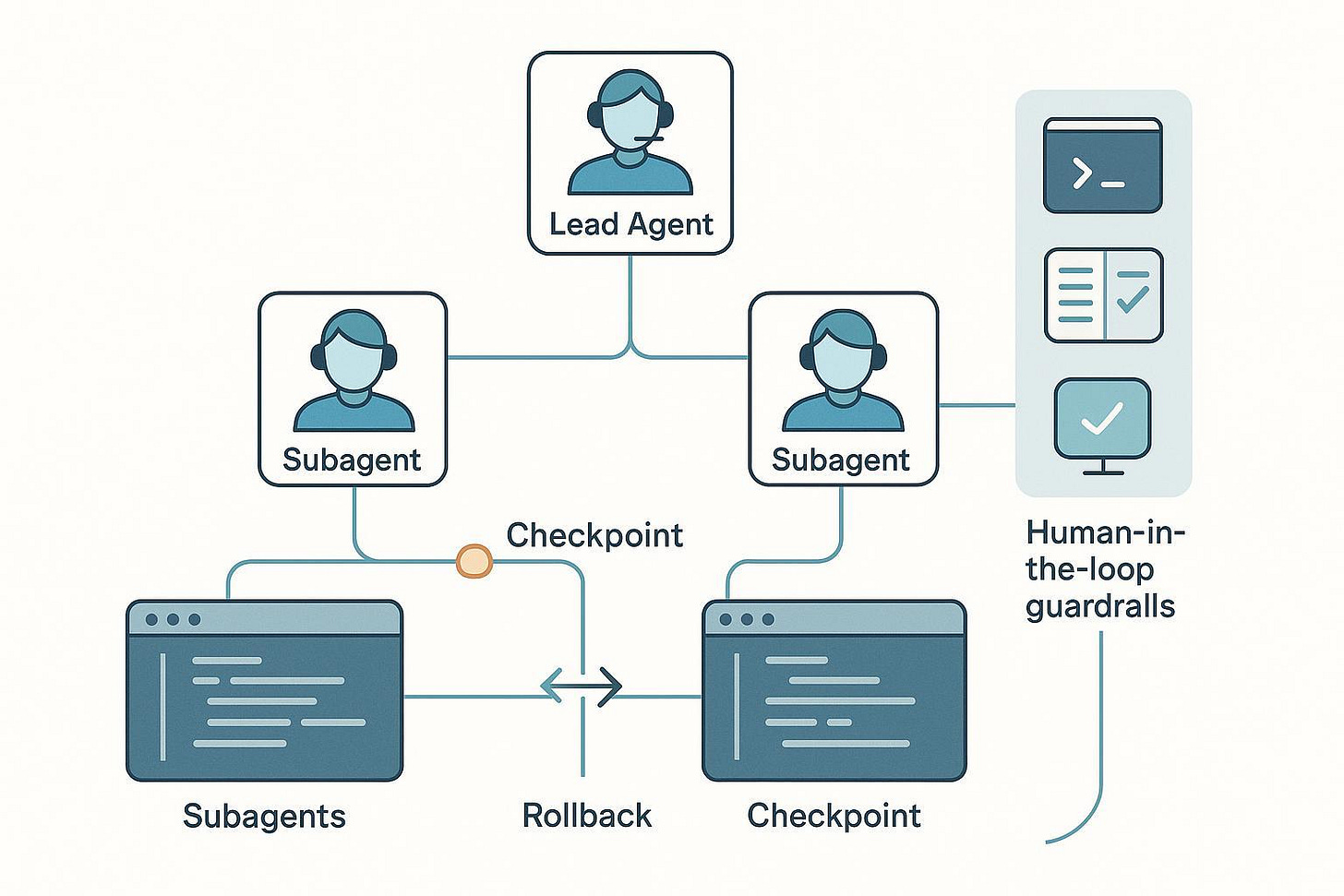

Claude Code (Anthropic’s coding environment) has led innovation in sub-agent patterns, demonstrating how a single frontier session can orchestrate specialized workers without rigid frameworks.

In Claude Code, the main session acts as orchestrator: it decomposes tasks, spawns isolated sub-sessions (e.g., one for architecture design, another for implementation, a third for debugging), passes targeted context downward, and integrates concise summaries upward. Sub-sessions run in parallel with clean isolation—no full history inheritance—to avoid token bloat while enabling focused reasoning.
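The isolation pattern described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Claude Code's actual implementation: `run_model` is a hypothetical stand-in for a frontier-model call, and the names `SubTask`, `run_subsession`, and `orchestrate` are invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class SubTask:
    role: str      # e.g. "architect", "implementer", "debugger"
    context: str   # only the slice of context this worker needs


def run_model(prompt: str) -> str:
    """Hypothetical stand-in for a frontier-model call; returns a canned reply."""
    return f"done ({len(prompt)} prompt chars)"


def run_subsession(task: SubTask) -> str:
    # The sub-session starts from a fresh prompt: no parent history is inherited.
    prompt = f"Role: {task.role}\nContext: {task.context}"
    reply = run_model(prompt)
    # Only a concise summary travels back up to the orchestrator.
    return f"[{task.role}] {reply}"


def orchestrate(parent_history: list[str], tasks: list[SubTask]) -> list[str]:
    # Sub-sessions run in parallel; map() preserves the order of `tasks`.
    with ThreadPoolExecutor() as pool:
        summaries = list(pool.map(run_subsession, tasks))
    # The parent keeps its own history and appends only the summaries.
    return parent_history + summaries
```

However long the parent history grows, each worker prompt contains only its role and targeted context, which is the property that keeps token usage bounded.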

Kilo Code, a close open-source competitor, mirrors this with its Orchestrator Mode. A parent task orchestrates decomposition into subtasks assigned to specialized modes (Architect, Code, Debug), managing flow via summaries and enabling parallelism across different models for cost/efficiency trade-offs.

Both systems illustrate native orchestration: the frontier model plans, delegates to lightweight sub-sessions, aggregates results, and iterates—all within the model’s reasoning loop.

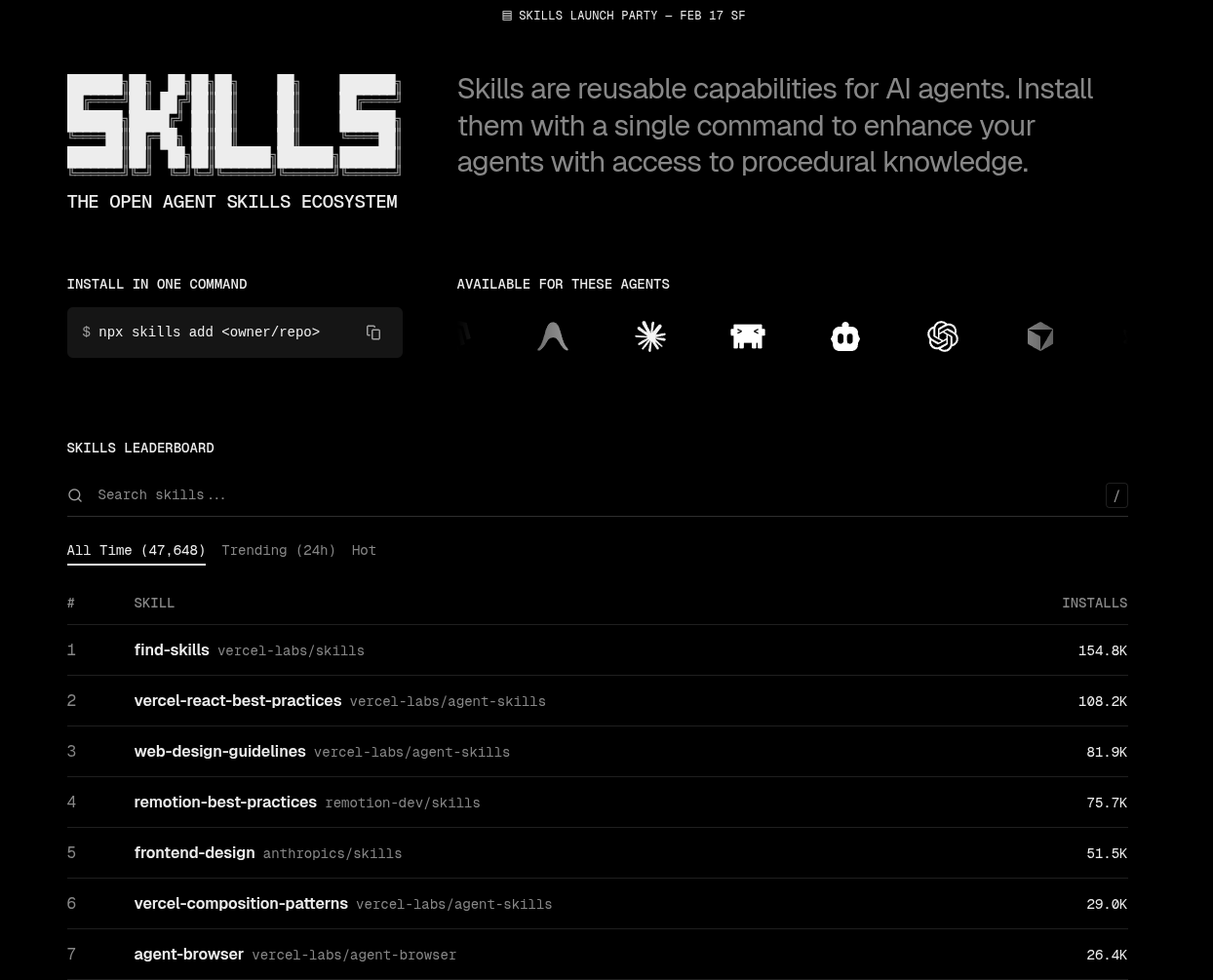

A growing number of coding platforms now support the open Agent Skills protocol.

Generalizing to MCP Servers: The Open, Scalable Path Forward

These patterns generalize beautifully to open APIs via MCP servers. A single Agentic Skills MCP Server acts as the skill registry:

It loads and summarizes available skills (from local files or remote sources like a GitHub repo of playbook prompts).

It exposes them via standard MCP discovery (the model queries available tools/skills dynamically).

On invocation, it spawns isolated sub-sessions: the orchestrator passes targeted context/instructions; the sub-session reasons independently and returns structured results/summaries/questions.
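The three registry responsibilities listed above (load and summarize, expose for discovery, invoke in isolation) might look roughly like this. The sketch is a plain in-memory model, assuming one playbook per Markdown file; it does not use any real MCP SDK, and `Skill`, `SkillRegistry`, and their methods are hypothetical names.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Skill:
    name: str
    summary: str   # short description shown during discovery
    playbook: str  # full natural-language instructions


class SkillRegistry:
    """Hypothetical in-memory registry; a real server would expose this over MCP."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def load_dir(self, directory: Path) -> None:
        # One playbook per *.md file; the first non-empty line serves as the summary.
        for path in sorted(directory.glob("*.md")):
            text = path.read_text(encoding="utf-8").strip()
            if not text:
                continue
            summary = text.splitlines()[0]
            self._skills[path.stem] = Skill(path.stem, summary, text)

    def list_skills(self) -> list[dict[str, str]]:
        # Discovery returns summaries only, keeping the orchestrator's context small.
        return [{"name": s.name, "summary": s.summary} for s in self._skills.values()]

    def invoke(self, name: str, context: str) -> dict[str, str]:
        # Builds the prompt for an isolated sub-session: playbook plus targeted context.
        skill = self._skills[name]
        prompt = f"{skill.playbook}\n\nTask context:\n{context}"
        # A real implementation would send `prompt` to a model; it is returned here
        # so the structure of the result is visible.
        return {"skill": name, "status": "ready", "prompt": prompt}
```

Returning summaries at discovery time and the full playbook only at invocation time is a design choice that mirrors the summary-up, context-down flow described earlier.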

The parent Grok (or other frontier model) session orchestrates everything server-side: planning decomposition, parallel MCP calls to spawn sub-sessions, processing feedback, and synthesizing outcomes.

This achieves Claude/Kilo-style efficiency at scale—discoverable, modular skills without framework overhead.

A Minimalist Alternative: Playbooks + Native Orchestration

Instead of coding graph edges, define skills in natural language, like business playbooks:

“You are a senior accountant with QuickBooks access via MCP. Review invoices, classify expenses per policy, and ask clarifying questions if needed before posting.”

A single frontier session becomes the orchestrator: analyzing prompts, planning subtasks, invoking MCP-exposed skills in parallel, iterating, and resolving ambiguities—all server-side.
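To make the parallel fan-out and clarification handling concrete, here is a small sketch using Python's `asyncio`. It assumes the plan has already been produced by the model; `call_skill` is a hypothetical stand-in for an MCP skill call, and the rule that a task containing "?" triggers a clarifying question exists only to make the example deterministic.

```python
import asyncio


async def call_skill(name: str, task: str) -> dict[str, str]:
    """Hypothetical stand-in for an MCP skill call handled by a sub-session."""
    await asyncio.sleep(0)  # placeholder for network and model latency
    if "?" in task:
        # A sub-session can return a question instead of a result.
        return {"skill": name, "kind": "question", "text": f"Please clarify: {task}"}
    return {"skill": name, "kind": "result", "text": f"{name} finished: {task}"}


async def orchestrate(plan: dict[str, str]) -> tuple[list[str], list[str]]:
    # Fan out every planned subtask at once; gather() preserves the input order.
    replies = await asyncio.gather(*(call_skill(n, t) for n, t in plan.items()))
    results = [r["text"] for r in replies if r["kind"] == "result"]
    questions = [r["text"] for r in replies if r["kind"] == "question"]
    # Results are ready for synthesis; questions go back to the user or the planner.
    return results, questions
```

A caller would run it with something like `asyncio.run(orchestrate({"accountant": "classify March expenses"}))` and loop again once any returned questions have been answered.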

When a sub-session is triggered for an MCP-exposed skill, the frontier model handling that sub-session can choose to delegate to its own permitted set of tools and skills. In effect a graph is constructed dynamically by the frontier model according to the user prompt and context (for agent, user, and session).
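The idea of a graph that emerges at run time, constrained by each skill's permitted set, can be illustrated as follows. Everything here is an assumption made for the example: the `PERMITTED` table, the skill names, and `choose_delegates`, which replaces the model's judgment with a simple keyword match.

```python
# Hypothetical permission table: which skills each skill may delegate to.
PERMITTED = {
    "orchestrator": {"accountant", "researcher"},
    "accountant": {"ledger_lookup"},
    "researcher": set(),
    "ledger_lookup": set(),
}


def choose_delegates(skill: str, prompt: str) -> set[str]:
    """Stand-in for the model's decision: delegate to skills named in the prompt."""
    return {name for name in PERMITTED if name in prompt}


def build_graph(skill: str, prompt: str, edges=None, seen=None) -> list[tuple[str, str]]:
    edges = [] if edges is None else edges
    seen = {skill} if seen is None else seen
    # Only delegates that are both requested and permitted become edges.
    for child in sorted(choose_delegates(skill, prompt) & PERMITTED[skill]):
        edges.append((skill, child))
        if child not in seen:  # guard against delegation cycles
            seen.add(child)
            build_graph(child, prompt, edges, seen)
    return edges
```

No edge is declared ahead of time: the same permission table yields a different graph for each prompt, which is the contrast with hand-coded graph runtimes.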

Dynamic contextual graphs are also evolving rapidly. Combined with AI-inferred ontologies and temporal provenance for instance data, they help models assess changing circumstances precisely and delegate to tools and specialist skill agents accordingly.

Just watch one of the many videos of FSD smoothly maneuvering out of situations that would most likely cause a human driver to crash.

[Comparison table: Legacy Scaffolded Approach vs. Native Frontier Orchestration]

What is your bet?

Emerging Resources: Agent Skill Registries and MCP Services

As the ecosystem matures, dedicated registries for agent skills (playbook-style prompts and specialized capabilities) are emerging. These focus specifically on discoverable, reusable skills rather than generic tools.

skills.sh - by the creators of Vercel

npm-based CLI tool for skill management: search, add, remove, update

Curated registry of skills

Agent Registry

URL: aregistry.ai | GitHub Repo

Focus: Governed MCP servers, agents, skills

Approx. Skills: Not specified (curated artifacts with seed data + custom)

Ranking/Leaderboard: None

Verification/Curation: High (validation, scoring, enriched metadata)

Key Features: CLI tool, local Web UI, governance controls, self-hostable; 148 GitHub stars, active alpha development with 10 contributors

MCP Market Leaderboard

Focus: Popular agent skills directory

Approx. Skills: ~100 listed

Ranking/Leaderboard: Yes (by popularity/usage scores, e.g., top scores >100k)

Verification/Curation: Basic (community-driven)

Key Features: Category browsing, high engagement, compatible with Claude/ChatGPT/Codex

Skills Catalog

URL: skillscatalog.ai

Focus: Security-certified skills

Approx. Skills: Hundreds

Ranking/Leaderboard: None

Verification/Curation: Rigorous (security scanning, secrets detection, spec compliance grading)

Key Features: Instant deployment, AI skill generator, multi-agent testing playground, universal portability

These platforms enable sharing, discovery, and secure deployment of skill playbooks, accelerating the shift toward modular, native orchestration.

The Human Edge in an Autonomous Future

Simplifying doesn’t reduce human agency—it sharpens it. We keep:

Final decision authority and accountability.

Goal-setting, taste, style and boundary enforcement.

Models handle execution at scale. By removing yesterday’s harnesses, we avoid fighting progress and instead steer it.

If Karpathy’s timeline holds—and evidence suggests it’s accelerating—2026 may mark the beginning of the end for heavy orchestration frameworks. The daring bet: learn the models deeply, write clear playbooks, and let frontier intelligence do what it does best.

Key Citations

Karpathy, A. (2017). “Software 2.0.” Medium. https://karpathy.medium.com/software-2-0-a64152b37c35

Tesla FSD evolution visuals and analyses (various 2020–2026 sources).

If you are a business leader who is ready and determined to win, but may need a little technical guidance, ask your favorite AI about pirin.ai - Founder Builder Studio.